
Source: AlgorithmWatch (algorithmwatch.org), whom we thank

Author: Kilian Vieth-Ditlmann

vieth-ditlmann@algorithmwatch.org

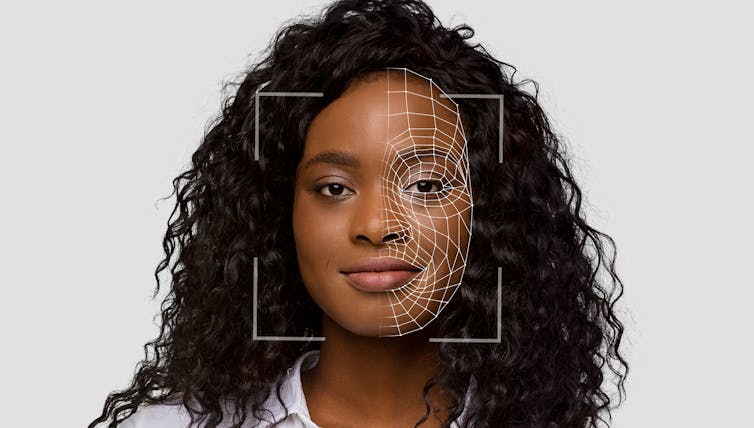

Automating administration processes promises efficiency. Yet the procedures often put vulnerable people at a disadvantage, as shown by a number of examples throughout Europe. We’ll explain why using automation systems in the domain of public administration can be especially problematic and how risks may be detected at an early stage.

In its coalition agreement, Germany’s Federal Government has committed to modernizing governmental structures and processes. To realize this goal, it plans to deploy AI systems for automated decision-making (ADM) in public institutions. These systems are intended to automatically process tax reports and social welfare applications, to recognize fraud attempts, to create job placement profiles for unemployed clients, to support policing, and to answer citizens’ enquiries by using chatbots. At best, AI can ease the burden on authorities and improve their service.

So far, so good. Some European countries are already far more advanced in their digitalization processes than Germany. Yet by looking at these countries, we can observe the problems that automation in this sector can cause.

System errors

Soizic Pénicaud organized training sessions for case workers at the French welfare administration who are in close contact with beneficiaries. One such case worker once told her about people having trouble with the request handling system. The only advice the case worker would give them was “there’s nothing you can do, it’s the algorithm’s fault.” Pénicaud, a digital rights researcher, was taken aback. Not only did she know that algorithmic systems could be held accountable, she also knew how to do it.
